Particle Nodes Core Concepts

In this document I’ll outline my current (2019-10-14) view of what particle nodes should look like in Blender. The most important design goal is the following; everything else follows from it.

Users should be able to work on the abstraction level that is relevant to them.

This means recognizing that different groups of users require different workflows. Some users need precise control over everything, while others only want to combine high-level functions. Some create long simulations, while others use particles just to distribute points in the first frame. Some create simulations with 10 particles, while others have millions of particles.

All of these use cases are perfectly valid and should be supported by Blender. The difficulty is to design and implement a node system that supports these different workflows. Users working at either end of each spectrum should not have to care too much about the other end.

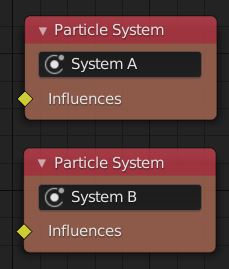

Particle System

A particle system is a named collection of particles. All particles in the same particle system have the same set of attributes and follow the same set of rules during the simulation. This is roughly equivalent to how the term “particle system” was used in older versions of Blender.

A particle simulation (or later maybe just “simulation”) can contain multiple particle systems that can interact with each other.

In the node tree, each particle system is represented by a Particle System node. These nodes act as “final” nodes in the node tree, because they have no output. They only have a single “Influences” input. This input socket is different from all other sockets we currently have in Blender: it allows multiple other sockets to be connected to it. This socket is the key to supporting different workflows in a single node tree.
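The relationship between a simulation, its particle systems and the multi-input “Influences” socket can be sketched as a small data structure. This is only an illustration in Python, not Blender's implementation; the class names `Influence`, `ParticleSystemNode` and `Simulation`, and the `link` and `add_system` methods, are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Influence:
    """Hypothetical stand-in for any node linked into a particle system."""
    name: str


@dataclass
class ParticleSystemNode:
    """A 'final' node: a single multi-input socket and no outputs."""
    name: str
    influences: list[Influence] = field(default_factory=list)

    def link(self, influence: Influence) -> None:
        # Unlike a regular input socket, a new link does not replace
        # an existing one; every link is kept.
        self.influences.append(influence)


@dataclass
class Simulation:
    """A simulation holds several named particle systems."""
    systems: dict[str, ParticleSystemNode] = field(default_factory=dict)

    def add_system(self, name: str) -> ParticleSystemNode:
        if name in self.systems:
            raise ValueError(f"particle system {name!r} already exists")
        system = ParticleSystemNode(name)
        self.systems[name] = system
        return system


sim = Simulation()
sparks = sim.add_system("Sparks")
sparks.link(Influence("Initial Grid Emitter"))
sparks.link(Influence("Gravity Force"))
```

After the two `link` calls, `sparks.influences` holds both influences, which is the behavior that distinguishes this socket from a regular single-link input.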

Influences

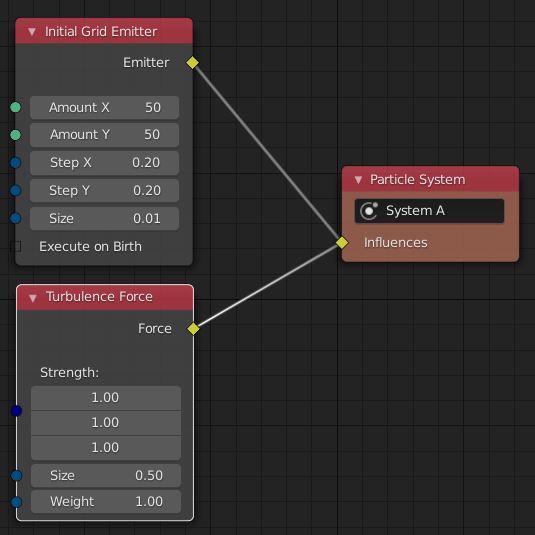

Blender will provide many nodes that describe the behavior of particle systems. For example, an emitter and a force both influence the way a particle system behaves when it is simulated. An arbitrary number of influences can be added to each particle system.

Different nodes representing influences can work on different abstraction levels. For example, a Gravity Force node is easy to use, but does not give a lot of flexibility. A Custom Force node, on the other hand, can allow the user to use math nodes to compute a force for every particle. An even lower-level Custom Simulation Step influence node could allow the user to use math to compute updated particle attributes directly from the last simulation state.

At this point one can start to see a problem. Influences can conflict with each other. When users want to implement the entire update function themselves, their update function might not be able to incorporate force influences. A conflict can also happen when two separate influences try to enforce constraints on particles that cannot work at the same time. Fortunately, in most cases it should be obvious when influences are incompatible. In those cases, the solver either tries to somehow combine the influences or issues an error and waits until the user has resolved the conflict.
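One way such a conflict check could look is sketched below. It only covers the first case mentioned above (a custom update step combined with forces) and takes the "issue an error" route rather than trying to combine influences. The `kind` labels and the `find_conflicts` function are assumptions made for this example, not part of any existing API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Influence:
    name: str
    kind: str  # hypothetical categories: "emitter", "force", "custom_step"


def find_conflicts(influences):
    """Return readable descriptions of influences that cannot coexist."""
    custom_steps = [i for i in influences if i.kind == "custom_step"]
    forces = [i for i in influences if i.kind == "force"]

    conflicts = []
    if len(custom_steps) > 1:
        names = ", ".join(step.name for step in custom_steps)
        conflicts.append(f"only one custom update step is allowed, got: {names}")
    # A user-defined update step replaces the built-in integration, so in
    # this sketch it has no place to incorporate force influences.
    for step in custom_steps:
        for force in forces:
            conflicts.append(f"{step.name} cannot incorporate {force.name}")
    return conflicts


influences = [
    Influence("Initial Grid Emitter", "emitter"),
    Influence("Gravity Force", "force"),
    Influence("Custom Simulation Step", "custom_step"),
]
for message in find_conflicts(influences):
    print("Conflict:", message)
```

Reporting all conflicts at once, instead of stopping at the first one, lets the user resolve them in a single pass over the node tree.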

Uber Solver

Depending on which influences a particle system has, different algorithms have to be used to simulate it. For example, when there is just an emitter and a force, a much simpler algorithm can be used compared to when granular materials are simulated. Yet another solver might be necessary when some kind of AI is used to simulate particles. I do not believe that a single solver can handle all of these kinds of simulations efficiently.

The most practical thing we can do is to select the best solver based on which influences are involved in the simulation. This is what the “uber solver” does. It parses the user-provided node tree, collects all the influences and delegates the actual simulation work to other solvers.

To be able to do its job, the uber solver has to understand what every influence does. That does not mean, however, that it has to know all the different influences that exist. In many cases, influences can be grouped behind common interfaces. Then the uber solver only has to understand the interface, and not every influence. Examples of such interfaces are emitters, events and forces.
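The dispatch idea can be sketched as follows: influences are grouped by the interface they implement, and the solver is chosen from the groups that are non-empty. The interface classes mirror the examples named above; `GranularMaterial`, the concrete influence classes and the solver names are assumptions made purely for illustration.

```python
class Emitter: ...
class Force: ...
class Event: ...
class GranularMaterial: ...  # hypothetical interface that needs a special solver


class InitialGridEmitter(Emitter): ...
class GravityForce(Force): ...
class TurbulenceForce(Force): ...
class SandContact(GranularMaterial): ...  # hypothetical influence


INTERFACES = (Emitter, Force, Event, GranularMaterial)


def group_by_interface(influences):
    """Sort influences into buckets keyed by the interface they implement."""
    groups = {interface: [] for interface in INTERFACES}
    for influence in influences:
        for interface in INTERFACES:
            if isinstance(influence, interface):
                groups[interface].append(influence)
                break
        else:
            # The uber solver cannot delegate what it does not understand.
            raise TypeError(f"unknown influence: {type(influence).__name__}")
    return groups


def choose_solver(influences):
    """Pick a solver name based on which interfaces are in use."""
    groups = group_by_interface(influences)
    if groups[GranularMaterial]:
        return "granular_solver"
    return "simple_integrator"


print(choose_solver([InitialGridEmitter(), GravityForce(), TurbulenceForce()]))
print(choose_solver([InitialGridEmitter(), SandContact()]))
```

Note that `choose_solver` never mentions `GravityForce` or `TurbulenceForce` by name. New force nodes can be added without touching the dispatch logic, as long as they implement the `Force` interface.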

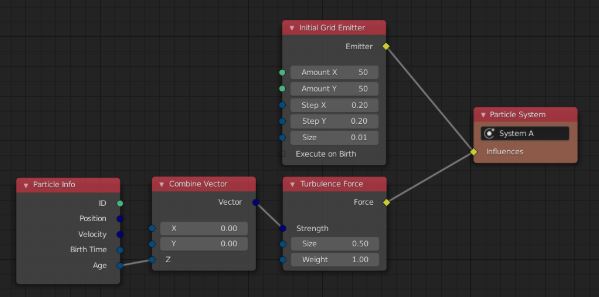

Particle Functions

In the picture above we see that the Initial Grid Emitter and the Turbulence Force node have input sockets. At first glance, they look the same (because they currently do). However, there is an important difference between them: the inputs of the Turbulence Force node can differ for different particles. For example, users might want the strength of the turbulence to depend on the distance to some mesh. The inputs of the Initial Grid Emitter node are global and not per particle.

A particle function is some node tree that ends in an input socket that is allowed to differ for each particle. Particle specific data can be accessed using special input nodes (similar to shading nodes). Using the same approach, other data like vertex weights and image data can be used to influence the simulation. Furthermore, this allows fairly simple influences like the Turbulence Force node to become quite powerful.
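A minimal sketch of the distinction, in Python: a per-particle input is modeled as a function that is evaluated once for each particle, while a global value is wrapped so that it returns the same result for every particle. For simplicity the example uses the distance to a point rather than to a mesh; `constant`, `falloff_from_point` and `turbulence_strengths` are hypothetical names.

```python
import math
from dataclasses import dataclass


@dataclass
class Particle:
    position: tuple


def constant(value):
    """Wrap a plain value so it can be used where a particle function is expected."""
    return lambda particle: value


def falloff_from_point(center, max_strength, radius):
    """Per-particle strength that fades linearly with distance from `center`."""
    def evaluate(particle):
        distance = math.dist(particle.position, center)
        return max_strength * max(0.0, 1.0 - distance / radius)
    return evaluate


def turbulence_strengths(particles, strength_fn):
    # The force evaluates its input once per particle, so the same
    # socket accepts both constant and particle-dependent values.
    return [strength_fn(particle) for particle in particles]


particles = [
    Particle((0.0, 0.0, 0.0)),
    Particle((1.0, 0.0, 0.0)),
    Particle((4.0, 0.0, 0.0)),
]
uniform = turbulence_strengths(particles, constant(0.5))
varying = turbulence_strengths(
    particles, falloff_from_point((0.0, 0.0, 0.0), max_strength=2.0, radius=2.0)
)
print(uniform)  # [0.5, 0.5, 0.5]
print(varying)  # [2.0, 1.0, 0.0]
```

A real implementation would likely evaluate such functions on whole arrays of particles at once for performance; the per-particle loop here only shows the semantics.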

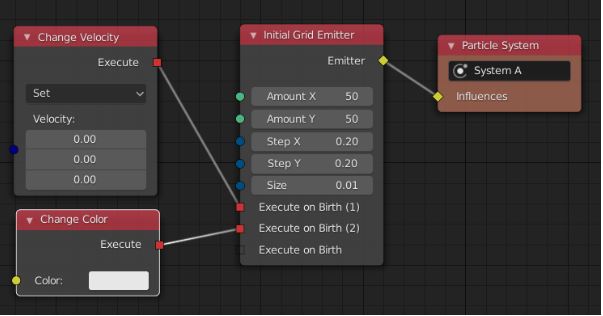

Actions

Some influences provide an additional customization point in the form of Execute inputs. These allow the user to pass additional instructions to the influence that are executed under certain circumstances. For example, the Initial Grid Emitter has an Execute on Birth input. Every action plugged into it is executed for every particle directly after the particle has been created. This allows the user to initialize other attributes like velocity and color. All actions are executed in a well-defined order.

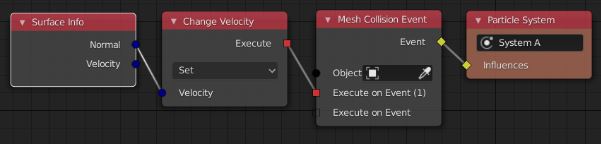

An action is always executed for individual particles. So nodes like the Particle Info node can be used to control e.g. the input of the Change Velocity node. Furthermore, more context information might exist when an action is executed. For example, when an action is executed in the context of a mesh collision event, additional information like the collision position and normal can be accessed.
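The following Python sketch shows how an Execute on Birth input could behave. It assumes that actions run in the order in which they are listed and that context information is passed as a plain dictionary; both choices, as well as the names `GridEmitter`, `change_velocity` and `change_color`, are illustrative assumptions rather than the actual design.

```python
from dataclasses import dataclass, field


@dataclass
class Particle:
    position: tuple
    velocity: tuple = (0.0, 0.0, 0.0)
    color: tuple = (1.0, 1.0, 1.0)


def change_velocity(velocity_fn):
    """Action whose input is computed per particle, like a particle function."""
    def action(particle, context):
        particle.velocity = velocity_fn(particle, context)
    return action


def change_color(color):
    """Action that assigns the same color to every particle it runs on."""
    def action(particle, context):
        particle.color = color
    return action


@dataclass
class GridEmitter:
    positions: list
    execute_on_birth: list = field(default_factory=list)

    def emit(self):
        particles = []
        for index, position in enumerate(self.positions):
            particle = Particle(position)
            # Extra information that is only available in this context.
            context = {"birth_index": index}
            # Assumed order: actions run in the order they were plugged in.
            for action in self.execute_on_birth:
                action(particle, context)
            particles.append(particle)
        return particles


emitter = GridEmitter(
    positions=[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    execute_on_birth=[
        change_velocity(lambda p, ctx: (0.0, 0.0, p.position[0] + 1.0)),
        change_color((1.0, 0.0, 0.0)),
    ],
)
born = emitter.emit()
print([p.velocity for p in born])  # [(0.0, 0.0, 1.0), (0.0, 0.0, 2.0)]
```

A collision event could pass a richer context in the same way, for example with the collision position and normal as additional entries.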

Summary

The presented topics are just the core concepts I have in mind for the node based particle system. None of these core concepts makes assumptions about the kind of particle simulation that is created. In fact, it might be possible to use the same concepts for other kinds of simulations. However, that has to be evaluated again in the future.

Influences and the Uber Solver allow users to work on the abstraction level that best fits their use case. Particle Functions and Actions allow users to specify complex behavior using nodes in a fairly concise way. Many features like Particle Groups can be implemented on top of these concepts.

I do have a work-in-progress implementation of all of these concepts. However, quite a lot of refactoring still has to be done. This is because 1) large parts of the implementation were developed when these core concepts did not exist and 2) I learned a lot of new things with regard to C++, performance, particle systems and more. Therefore, I’d solve some problems differently nowadays to get a cleaner and more efficient implementation.

Just because the concepts explained above support many different ways to define a particle simulation does not mean that the initial implementation in Blender will support every use case. The order in which use cases will be supported depends on multiple factors. The most important one currently is to make the new system a viable alternative to the old particle system, so that we can remove the old one.

Personally, I’m quite happy with this solution. So thanks to everyone who helped to develop it. I’m currently not aware of any major issues. Please talk to me if you think differently.